From running services to managing them#

In the previous post I walked through my single-node Proxmox setup – the hardware, every VM and container, and 40+ self-hosted services spread across 23 Docker Compose stacks. This post is about the part that comes after: actually managing all of that without losing your mind.

How the GitOps pipeline works#

First off, I owe a lot of this setup’s foundation to Nick Cunningham’s excellent guides on setting up Forgejo and automating Docker updates with Renovate and Komodo. If you’re thinking about building something similar, start there. This is a Kubernetes-less setup with a GitOps approach – plain Docker Compose all the way down, orchestrated through git and Komodo rather than k8s. Some people prefer Kubernetes for this kind of thing, and that’s totally valid, but I like the simplicity of Compose and wanted to see how far I could push it.

All of those Docker Compose files live in a single git repository hosted on my self-hosted Forgejo instance. The repo structure is straightforward – one directory per server, one subdirectory per stack:

{server}/{stack}/docker-compose.yml

...

debian-host/caddy/docker-compose.yml

debian-host/authentik/docker-compose.yml

debian-host/paperless-ngx/docker-compose.yml

...

immich/immich-app/docker-compose.yml

adguard/adguard/docker-compose.ymlEach stack also has a .gitignore that always excludes .env (secrets stay on the server, never in git) and usually excludes data directories too.

The deployment flow works through Komodo, which acts as a build and deployment tool on top of Docker Compose. Every server runs a Komodo Periphery agent with a local clone of the repo. When I merge a PR, Komodo automatically pulls the changes to the right server and the stack updates itself.

Renovate handles automated Docker image updates, creating PRs whenever a new version is available.

This pipeline handles the deployment side of things really well. But there’s a whole category of tasks it doesn’t touch: adding DNS entries in AdGuard, configuring OAuth2 providers in Authentik, checking container health in Komodo, inspecting VM resources in Proxmox. Each of those means opening a different web UI, clicking through forms, and hoping you didn’t forget a step. For a single new service, I might touch four or five different admin panels.

What is MCP and why use it for homelab automation#

MCP is an open standard, but the way I think about it is simpler: MCP servers are API wrappers shaped for LLM consumption. They describe what tools are available, what parameters they take, and when to use them – structured so an AI can invoke your APIs on your behalf, with your permission, using your credentials, without having to parse raw API docs.

I use Claude Code as my terminal-based AI coding assistant. It already knows how to read files, run commands, and write code, and its agentic loop is mature enough to handle complex multi-step tasks reliably. The idea was straightforward: what if it could also talk directly to my homelab services? I could have written shell scripts that curl these APIs, but the value of MCP is that the AI can compose tools dynamically based on context – it decides which APIs to call and in what order based on what I’m asking for, rather than me hardcoding every possible workflow.

I built four MCP servers, one for each core API in my infrastructure:

- AdGuard Home – DNS management. Adding and removing DNS rewrites for

*.samik.devdomains, which is something I do every time I add a new service. - Authentik – Identity and SSO. Creating OAuth2 providers, configuring OIDC for new applications, managing users and groups.

- Komodo – Container orchestration. Listing stacks, deploying updates, checking logs, managing resources across all five servers.

- Proxmox VE – Virtualization. Checking node health, listing VMs and LXCs, inspecting resource usage.

What this enables in practice: “add a new service” becomes a single conversation. Claude creates the Docker Compose file, adds the Caddy reverse proxy entry, creates a DNS rewrite via the AdGuard MCP, configures SSO via the Authentik MCP, and opens a PR – all without me leaving the terminal or opening a single web UI.

Building 4 custom MCP servers for homelab management#

I used Claude Code itself to build all four MCP servers – the AI helped build the tools that let it manage my infrastructure.

Architecture#

All four servers are TypeScript, built on the official @modelcontextprotocol/sdk. A consistent architecture emerged across them – partly because I kept asking Claude to follow the same patterns, and partly because the patterns just made sense. Every server’s entry point follows the same startup sequence:

// Every MCP server's index.ts follows this pattern

async function main(): Promise<void> {

const config = loadConfig(); // Read env vars, validate

const client = createClient(config); // Create API client

await validateConnection(client); // Health check the target service

const server = createServer(); // Create MCP server instance

registerAllTools(server, client, config); // Register all tool handlers

await startServer(server, config); // Start stdio or HTTP transport

}Each server follows the same directory layout:

src/

core/

config.ts # Env var loading and validation

client.ts # API client setup

server.ts # MCP server instance creation

tools.ts # Tool registration helpers

errors.ts # Error handling utilities

logger.ts # Logging (never to stdout!)

tools/

stacks.ts # (Komodo) or users.ts (Authentik), etc.

servers.ts # Tool handlers organized by domain

deployments.ts

...Komodo has stacks.ts, servers.ts, deployments.ts, builds.ts; Authentik has users.ts, groups.ts, applications.ts, providers.ts, tokens.ts. If you’ve seen one, you roughly know how the others work.

All four are published to npm under @samik081/* and as Docker images on GHCR (ghcr.io/samik081/*), with multi-arch builds for both linux/amd64 and linux/arm64. Each server lives in its own git repo on GitHub, and I aggregate them via git submodules in a private mcp-servers monorepo hosted on my Forgejo instance. The monorepo makes it easy to modify and manage all four servers at once – or spin up a new one following the same patterns and conventions. It’s also where the main CLAUDE.md lives, the one that covers the unified architecture, shared rules, and development workflow across all the servers.

Safeguards and context management#

One thing I’m particularly happy with is the access tier system and the category allowlisting mechanism. AdGuard and Authentik support two access tiers (read-only and full access), while Komodo and Proxmox support three (read-only, read-execute, and full). This means I can give Claude read access to everything without worrying that a misunderstood request will accidentally tear down a running stack.

On top of that, each server supports category allowlisting – you can configure exactly which groups of tools get registered (say, just DNS rewrites from AdGuard, or just stack management from Komodo) so the agent only sees the tools relevant to the task at hand. This idea is something I’m pretty proud of, because context management turns out to be critical when working with agents. Every MCP tool you register adds to the agent’s context, and bloated context means worse performance, higher costs, and more confused responses. The access tiers and category allowlisting together keep things tight. The tool registration wrapper handles this transparently:

export function registerTool(

server: McpServer, config: AppConfig, options: ToolRegistrationOptions

): boolean {

// Access tier gate: skip tools above the configured tier

if (TIER_LEVELS[config.accessTier] < TIER_LEVELS[options.accessTier]) {

logger.debug(

`Skipping tool "${options.name}" (requires ${options.accessTier})`,

);

return false;

}

// Category allowlist: skip tools outside the allowed categories

if (

config.categories !== null &&

!config.categories.includes(options.category)

) {

logger.debug(

`Skipping tool "${options.name}" (category "${options.category}" not in allowed categories)`,

);

return false;

}

// ... register with annotations, error handling

}Tools that require a higher access tier, or belong to a category that isn’t allowlisted, simply don’t get registered. Claude never even sees them.

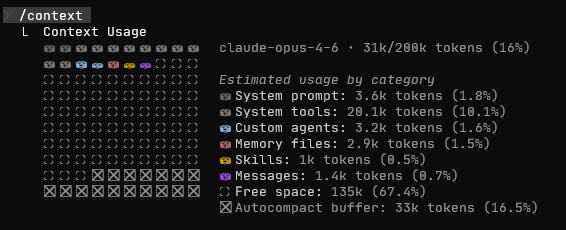

/context command that visualizes exactly what’s consuming your context window – system prompt, tools, memory files, messages, and more. It’s a great way to see where your tokens are going and whether your context budget is being spent wisely.Here’s what it looks like with all four of my MCP servers loaded with fairly broad category allowlisting:

Notice that System tools – which includes all registered MCP tool definitions – takes up 20.1k tokens (10.1% of the context window). Compare that to Memory files (which includes CLAUDE.md) at just 2.9k tokens (1.5%). MCP tools are eating roughly 7x more context than the entire project runbook. That’s why I built the category allowlisting – if you’re doing DNS work, you don’t need Proxmox VM tools burning 5,000 tokens of context for nothing.

Using CLAUDE.md as a homelab runbook#

Claude Code reads a file called CLAUDE.md at the start of every conversation. It’s the mechanism for giving the AI persistent context about your project – conventions, architecture, rules, whatever you need it to know. (Other tools like Cursor and Codex use a standardized AGENTS.md file for the same purpose. Some people symlink the two, or reference AGENTS.md from inside CLAUDE.md so both tools pick up the same context.)

I went a bit overboard with mine. The homelab-compose CLAUDE.md is a 200+ line operational runbook. It’s got an architecture table mapping servers to IPs and stacks, Docker Compose conventions (env_file patterns, restart policies, image tag rules), Caddy reverse proxy templates for both HTTP and HTTPS backends, and a step-by-step checklist for adding a new service.

That checklist is probably the most important part:

## Adding a New Service

1. **Ask the user** which server to deploy to -- NEVER guess this

2. User provides compose file content OR a link to the app's docs

3. User provides the domain (e.g., `myapp.samik.dev`)

4. Create `{server}/{stack-name}/docker-compose.yml`

5. Create `{server}/{stack-name}/.gitignore` (minimum: `.env`, plus any data dirs)

6. Add Caddy reverse proxy entry to `debian-host/caddy/conf/Caddyfile`

7. Add DNS rewrite via AdGuard MCP: `rewrites_add` with `{name}.samik.dev` -> `10.21.37.40`

8. If the app supports OIDC/SSO, configure Authentik as the identity provider

9. Commit, push branch, open PR via `tea` CLI (`tea` is Forgejo's CLI, like `gh` for GitHub)

10. After merge: Komodo auto-pulls to the server

11. User creates `.env` on server via SSH

12. Create Komodo Stack resource via MCPThis routine is also a perfect candidate for a Claude Code custom command – basically a reusable skill/plugin that encodes the whole workflow so I wouldn’t even need to spell it out each time. I’m planning to build that and test it the next time I add a service.

The rules section is equally specific. “NEVER guess which server a service should be deployed to – always ask.” “NEVER deploy/start a stack via Komodo until the PR is merged.” These exist because I can imagine what would happen if an AI made assumptions about infrastructure. The stacks use files_on_host, which means the server needs the actual files from a git pull before Komodo can do anything with them. Deploying before merge means deploying files that don’t exist yet.

The monorepo’s CLAUDE.md is another 9000+ characters, covering critical rules like “never use console.log – stdout is the MCP JSON-RPC transport,” the git workflow (GitHub as primary, Forgejo as mirror), and the release process.

These are operational manuals written for an AI agent, not documentation written for humans.

/claude-md-management:revise-claude-md command is pretty helpful for keeping CLAUDE.md up to date – I run it often after sessions and it captures learnings from the conversation and suggests updates. This comes from Anthropic’s stock claude-md-management plugin, which you can add to your own setup by running /plugins inside Claude Code.Keeping CLAUDE.md focused#

Since CLAUDE.md is taking up tokens before you’ve even typed your first message, you want the stuff in there to pull its weight. If the agent would’ve needed to look something up anyway (project conventions, architecture decisions, common gotchas), front-loading it in CLAUDE.md saves a round trip. Same goes for things that would take the agent several iterations to figure out on its own – like which server a service belongs on, or which port range is already in use. That’s the sweet spot.

For things that are more niche, only come up occasionally, or need lengthy documentation – say, the exact steps to rotate an Authentik API token, or the specific flags for a one-off migration script – I’ve found it’s better to keep those in separate docs and just mention their existence in CLAUDE.md. Something like “see docs/token-rotation.md for the Authentik token refresh process.” The agent will go read that file when it actually needs it, and the rest of the time it’s not eating context for no reason.

My MCP monorepo CLAUDE.md clocks in at around 9KB, roughly 2,500 tokens, and that’s felt like a reasonable size for what it covers. But honestly, this is all pretty new territory – I don’t think anyone has a definitive answer on the “right” size yet. It probably varies a lot depending on how complex your project is and how often you’re switching between different types of tasks. I’d say start with what you find yourself repeatedly explaining to the agent, put that in CLAUDE.md, and iterate from there.

Custom slash commands for automated deployment#

Claude Code supports custom slash commands – markdown files in .claude/commands/ that define reusable workflows you can invoke with a / prefix. I built a /deploy family for releasing MCP server updates:

/deploy <repo> <patch|minor|major>– creates a PR, pauses for me to review, then merges and releases after I approve/deploy-auto <repo> <patch|minor|major>– same thing but auto-merges without pausing/deploy-all <patch|minor|major>– deploys all repos that have uncommitted changes, creating PRs in parallel/deploy-all-auto– full auto mode across every repo

What /deploy actually does under the hood is a pretty involved sequence: it detects changes via git status, analyzes the diffs to generate a descriptive commit message and PR description, creates a feature branch, commits, pushes to GitHub, creates a PR via gh pr create, optionally pauses for human review, squash-merges the PR, pulls main, mirrors to Forgejo, runs npm version <bump>, pushes tags, creates a GitHub release, and finally updates the parent repo’s submodule pointer. The /deploy commands use gh because the MCP server repos live on GitHub, while the homelab-compose repo lives on Forgejo (hence tea for PRs there).

The -all variants are where it gets fun. They launch parallel subagents – one for each repo with changes – so a single /deploy-all patch can create, merge, and release four packages simultaneously. What would be dozens of manual commands across four repos becomes one slash command and a few seconds of waiting.

Here’s what the /deploy-all command file actually looks like – also written by Claude Code, naturally. It’s just a markdown file that Claude Code interprets as a multi-phase workflow:

---

description: Deploy all repos with uncommitted changes (manual merge — pauses for PR review)

argument-hint: "<patch|minor|major>"

allowed-tools:

- Bash

- Task

- AskUserQuestion

- Read

- Glob

- Grep

---

You are deploying all MCP server repos that have uncommitted changes.

This command creates PRs for review, then after user approval merges and releases.

## Input Validation

The version bump type is: `$ARGUMENTS`

If `$ARGUMENTS` is empty or not one of `patch`, `minor`, or `major`, immediately

stop and tell the user:

> Usage: `/deploy-all <patch|minor|major>`

## Repos

The four repos are subdirectories of the monorepo:

- `mcp-adguard-home`

- `mcp-authentik`

- `mcp-komodo`

- `mcp-pve`

## Phase 1: Detect Changes

For each repo, run `git status --porcelain` to detect uncommitted changes.

Collect the list of repos that have changes. If no repos have changes,

tell the user and stop.

## Phase 2: Analyze Changes & Generate Descriptions

For each changed repo, read its full diff. Also check for untracked files.

**Determine whether to use shared or per-repo descriptions:**

- If the diffs are **functionally identical or parallel** across all changed repos,

generate a **single shared description** used for all repos.

- If the diffs **differ meaningfully** between repos, generate **independent

per-repo descriptions**.

For each description, generate:

- **Commit message**: conventional-commit-style

- **Branch name**: derived from commit message in kebab-case

- **PR title**: same as commit message

- **PR body**: detailed description with `## Summary` and bullet points

Present all descriptions to the user. Use `AskUserQuestion` with options:

- "Looks good, proceed"

- "Let me edit"

## Phase 3: Create PRs (parallel)

Launch one **subagent per repo**, running in **parallel**. Each subagent:

1. Create feature branch

2. Stage and commit all changes

3. Push to GitHub

4. Create PR via `gh pr create`

5. Return the PR URL

## Phase 4: Present PRs for Review

Collect all PR URLs. Present them to the user:

> **PRs created — please review:**

> - `mcp-adguard-home`: <PR URL>

> - `mcp-authentik`: <PR URL>

> ...

Ask: **"Merge all PRs and proceed with release?"**

## Phase 5: Merge & Release (parallel)

Launch one **subagent per repo**, running in **parallel**. Each subagent:

1. Squash-merge the PR on GitHub

2. Switch back to main and pull

3. Mirror to Forgejo

4. Version bump (`npm version $ARGUMENTS`)

5. Push version commit + tag to both remotes

6. Create GitHub release

## Phase 6: Update Parent Submodules

Update the parent repo's submodule pointers for deployed repos.

## Summary

Print a summary: which repos were deployed, new version numbers,

and links to GitHub releases.How it all looks in practice#

Deploying a new service end-to-end#

The best way to explain this is with a real example. Recently I wanted to add BentoPDF – a self-hosted PDF toolkit – to my setup. Here’s what the actual conversation looked like:

> Add BentoPDF to debian-host at pdf.samik.dev

I'll implement the plan. Let me read the Caddyfile and set everything up.

[Read debian-host/caddy/conf/Caddyfile]

[Write debian-host/bentopdf/docker-compose.yml]

Created BentoPDF container on port 8090

[Write debian-host/bentopdf/.gitignore]

[Edit Caddyfile]

Added pdf.samik.dev reverse proxy with COOP/COEP headers

[MCP: adguard → rewrites_add] pdf.samik.dev → 10.21.37.40

Files created and DNS rewrite added. Creating branch and PR...

[git checkout -b feat/bentopdf]

[git commit — 3 files changed, 22 insertions(+)]

[git push -u origin feat/bentopdf]

[tea pr create — PR #188 created]

> Merged

[MCP: komodo → write_resource] Created Stack 'bentopdf' on debian-host

[MCP: komodo → deploy_stack] Deploying bentopdf

[MCP: komodo → get_stack_log] bentopdf | ready for start up

BentoPDF is up and running at pdf.samik.dev.Docker Compose file, Caddy config with the right COOP/COEP headers for browser-based PDF rendering, DNS rewrite, PR, and post-merge deployment – all in one conversation. No tab-switching, no copy-pasting IPs, no forgetting a step in the Caddyfile.

The MCP server development loop itself benefits from the tooling. I make changes to all of the servers (for example, asking Claude Code to add HTTP transport to all of them), type /deploy-all-auto patch, and watch as PRs get created, merged, and released across all four repos. npm packages published, Docker images pushed to GHCR, submodule pointers updated, all from the same terminal session.

Inspecting the infrastructure#

Infrastructure health checks work the same way. Here’s what “how are my servers doing?” actually looks like:

> How are my servers doing?

[MCP: komodo → list_servers] 5 servers, all reporting Ok

[MCP: komodo → get_server_stats] Querying stats for all 5...

All 5 servers are healthy:

Server CPU Memory Disk Load

debian-host 56.2% 10.7/19.4 GB 81.1/243.5 GB 2.34

media-server 3.2% 3.4/5.8 GB 12.4/62.8 GB 0.27

nextcloud 7.1% 3.1/5.8 GB 12.1/30.4 GB 0.19

immich 1.8% 2.3/7.7 GB 10.7/30.4 GB 0.27

adguard 2.3% 1.2/3.8 GB 19.9/30.5 GB 0.00

debian-host is the busiest at 56% CPU with moderate load.

adguard has the highest disk usage at 65% — worth keeping

an eye on, but nothing urgent.Or sometimes you just want a quick visual overview:

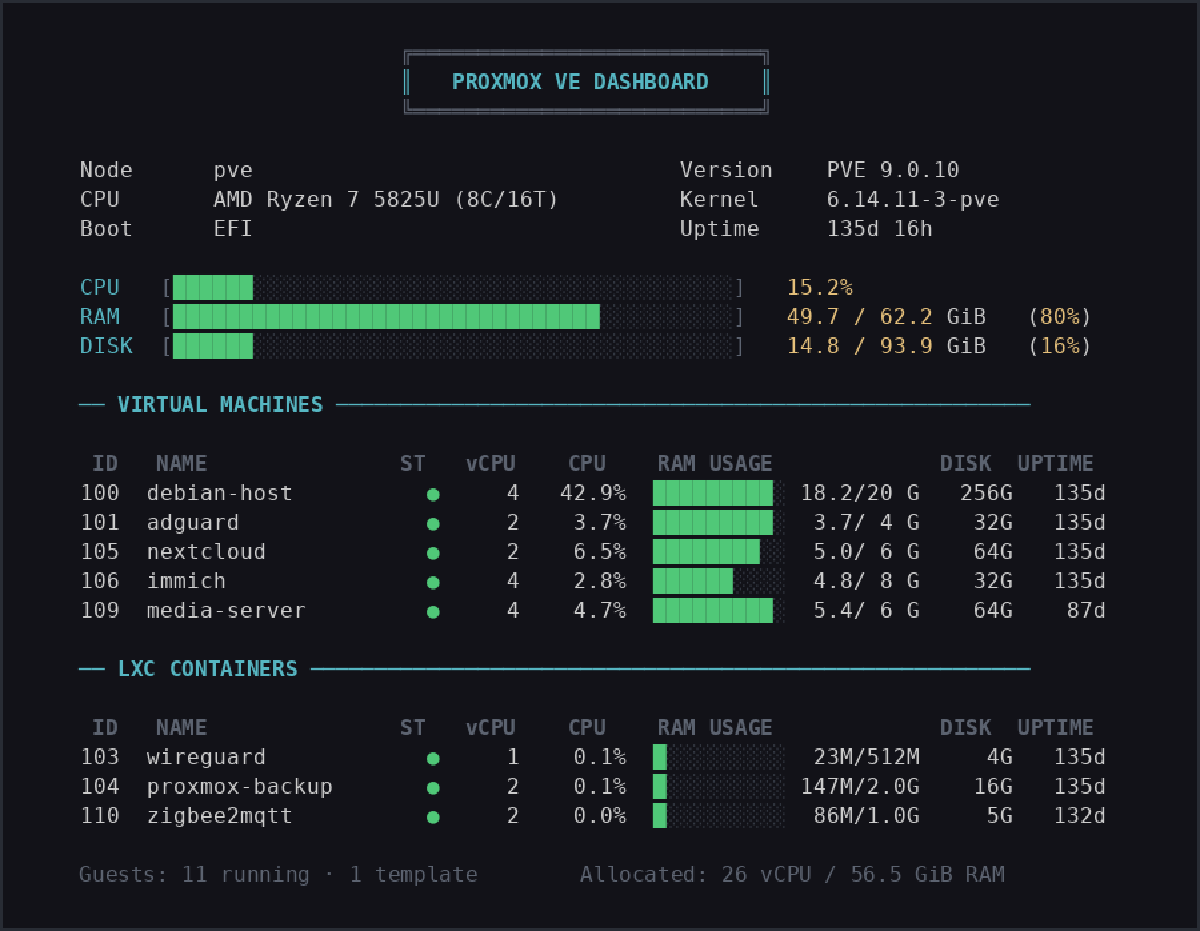

> Print beautiful, clear ASCII dashboard from my proxmox node/VMs/LXCs

[MCP: pve → list_nodes]

[MCP: pve → list_cluster_resources (type: "vm")]

[MCP: pve → get_node_status (node: "pve")]

╔═══════════════════════════╗

║ PROXMOX VE DASHBOARD ║

╚═══════════════════════════╝

Node pve Version PVE 9.0.10

CPU AMD Ryzen 7 5825U (8C/16T) Kernel 6.14.11-3-pve

Boot EFI Uptime 135d 16h

CPU [██████░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░] 15.2%

RAM [████████████████████████████████░░░░░░░░░░] 49.7 / 62.2 GiB (80%)

DISK [██████░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░] 14.8 / 93.9 GiB (16%)

── VIRTUAL MACHINES ──────────────────────────────────────────────────────

ID NAME ST vCPU CPU RAM USAGE DISK UPTIME

100 debian-host ● 4 42.9% █████████░ 18.2/20 G 256G 135d

101 adguard ● 2 3.7% █████████░ 3.7/ 4 G 32G 135d

105 nextcloud ● 2 6.5% ████████░░ 5.0/ 6 G 64G 135d

106 immich ● 4 2.8% ██████░░░░ 4.8/ 8 G 32G 135d

109 media-server ● 4 4.7% █████████░ 5.4/ 6 G 64G 87d

── LXC CONTAINERS ────────────────────────────────────────────────────────

ID NAME ST vCPU CPU RAM USAGE DISK UPTIME

103 wireguard ● 1 0.1% █░░░░░░░░░ 23M/512M 4G 135d

104 proxmox-backup ● 2 0.1% █░░░░░░░░░ 147M/2.0G 16G 135d

110 zigbee2mqtt ● 2 0.0% █░░░░░░░░░ 86M/1.0G 5G 132d

Guests: 11 running · 1 template Allocated: 26 vCPU / 56.5 GiB RAM

All 11 guests running strong with 135+ days uptime. debian-host is the

busiest at 42.9% CPU and 91% RAM usage.Tell me that’s not gorgeous.

Summary#

What’s next#

Reactive monitoring#

Right now, the MCP servers only respond to my requests – I ask Claude to check something, and it does. What I’d really like is for the AI to be notified when something goes wrong: a stack crashes, a disk fills up, a certificate is about to expire. Combining MCP with something like ntfy for push notifications could close that loop, turning this from “AI that manages my homelab when I ask” into “AI that watches my homelab and tells me when something needs attention.”

Grafana integration#

Another thing on my list: querying Grafana or InfluxDB directly from the terminal. Right now, debugging a misbehaving stack means switching to the Grafana web UI, finding the right dashboard, and eyeballing graphs. Being able to ask Claude “show me CPU and memory for the immich stack over the last 24 hours” and get actual data back would make troubleshooting a lot faster. Grafana already has an official MCP server, so this one might be the easiest win yet.

Per-tool allowlisting for MCP servers#

I’m also planning to make the allowlisting more granular – right now it works at the category level (e.g., “stacks” or “DNS rewrites”), but I’d like to support allowlisting or blacklisting individual tools. That way I could expose just the three or four tools I actually need for a given workflow instead of an entire category, squeezing even more out of the context budget.

Why build my own instead of using existing MCP servers?#

Fair question – there are community MCP servers out there for some of these services. But these tools have direct access to my infrastructure: DNS, SSO, containers, VMs. That’s not something I’m comfortable outsourcing to a dependency I don’t fully control. One malicious or compromised update that I pull without checking could do real damage. Building my own means I audit every line of code that touches my homelab.

There’s also a practical reason. I want consistent features across all four servers – allowlisting to restrict which tools are exposed, access tiers to separate read-only monitoring from write operations, uniform error handling, the same config patterns. With third-party servers, you get whatever the maintainer decided to build, and good luck getting four different projects to agree on a common security model.

And honestly, building your own is surprisingly cheap and fast now. I used Claude Code to build all four servers – most of the work was describing what I wanted and reviewing what it produced. The barrier to “just build it yourself” has dropped dramatically, so the trade-off between trusting someone else’s code and writing your own tilts pretty heavily toward writing your own.

The exception would be official MCP servers maintained by the projects themselves – if Proxmox or Komodo shipped their own, I’d probably use those over rolling my own. But for now, none of these services offer one.

Takeaways#

The biggest takeaway, at least for me, is the pattern itself: a CLAUDE.md runbook for giving the AI deep context about your infrastructure, custom MCP servers for giving it API access to your services, and slash commands for codifying multi-step workflows into repeatable one-liners. None of these pieces are complex on their own – the MCP servers are TypeScript wrappers around REST APIs, the CLAUDE.md is a markdown file, the slash commands are prompt templates. But combined, they turn a general-purpose AI coding assistant into something that genuinely understands and can operate your specific infrastructure.

The MCP servers are all open source on GitHub:

- mcp-adguard-home – AdGuard Home DNS management

- mcp-authentik – Authentik identity provider

- mcp-komodo – Komodo container orchestration

- mcp-pve – Proxmox VE virtualization